Apple did not exhibit at last week’s 2019 CES tech conference in Las Vegas, but it was nevertheless determined to make a statement.

A large billboard near the exhibition, echoing the famous slogan “What happens in Vegas, stays in Vegas,” informed CES attendees that “What happens on your iPhone stays on your iPhone.”

Apple never shows up at CES, so I can’t say I saw this coming. pic.twitter.com/8jjiBSEu7z

Chris Velazco (@chrisvelazco), January 4, 2019

With digital privacy becoming one of the most important issues of our time, this bold statement is likely to press the right buttons for many people. But is it true?

The billboard also included a link to Apple’s Privacy page, which outlines various ways in which its iDevices are designed to protect users’ privacy. The features listed on the page are indeed impressively privacy-friendly. There is no doubt that “out-of-the-box,” iPhones are more secure and privacy-friendly than Android devices.

Trust

iOS is closed source

iOS uses 100% closed source proprietary software. This means that there is no way for anybody to independently check that Apple is doing what it says it is doing. In other words, we must simply trust Apple.

Can we trust Apple though? It’s a reputable US company with more than 1.3 billion products in active use worldwide. That seems trustworthy.

PRISM

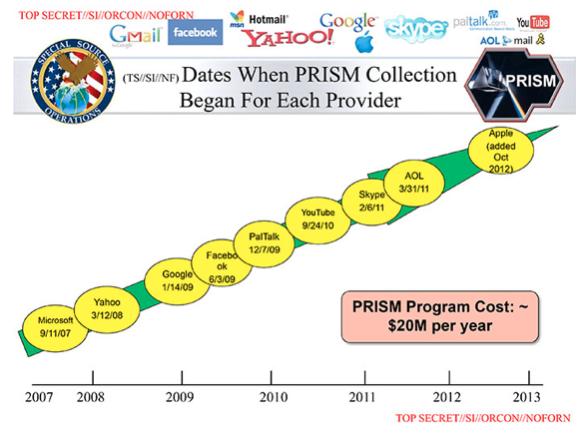

In 2013, National Security Agency (NSA) contractor Edward Snowden released a huge trove of highly sensitive documents to the world’s press.

One of the most startling revelations contained in these documents was that all the top US tech companies colluded with the NSA to provide it with indiscriminate access to their users’ intimate data (irrespective of whether they were suspected of any wrongdoing).

This operation was code-named PRISM, and although Apple was the last of the big names to join up, it did so in the end.

End-to-end encryption

Faced with a potentially catastrophic loss of confidence from its users when this information became publicly available, Apple has since struck an aggressively anti-government and pro-privacy stance.

In 2014 it infuriated then-FBI director James Comey by implementing end-to-end (e2e) encryption on its phones. This meant that Apple could no longer (and still cannot) access users’ data on its devices, as only the user holds the encryption keys.

The resulting conflict came to a dramatic head in early 2016, when the FBI obtained a court order requiring Apple to develop a special version of iOS to help it decrypt an iPhone 5C used by one of the married couple responsible for the 2015 San Bernardino terrorist attack. Apple refused.

Whether or not this undoubtedly brave stand is enough to restore trust in the company after the PRISM betrayal is for you to decide. But there are a couple of additional points worth considering…

The feds got the data anyway

The stand-off was finally resolved only when the FBI hired Cellebrite, an Israeli professional hacking company, to extract the data without Apple’s co-operation. Which it did.

Cellebrite has also now provided US law enforcement with the tools necessary to unlock every iPhone ever made (at last report, although this is no doubt an ongoing arms race between Apple and Cellebrite).

So no matter how trustworthy Apple is, or how much it big-ups its latest security systems, the feds can (probably) access the data on your iPhone if they want it badly enough anyway.

iCloud

By default, what happens on your iPhone does not, in fact, stay on your iPhone. It gets automatically uploaded to iCloud.

While data stored on your phone is securely e2e encrypted so that Apple itself cannot access it, this is not true of data stored online using iCloud. Which is enabled by default on most users’ phones.

Apple has been working on stronger e2e iCloud backup encryption for quite some time now, but has yet to showcase it. This means that Apple still holds the encryption keys protecting your backed-up content, and can access it if it so chooses (or is forced to).

If Apple can access your data, then so can others. iCloud relies on exactly the same cloud-based network architecture that its rivals do, and as the repeated drumroll of data breaches that hit the news almost every day shows, there is no way to guarantee that data stored anywhere in the cloud is safe from hackers.

It is worth stressing that iMessages backed up to iCloud are similarly vulnerable, but that is not the only problem with the app…

iMessage is not secure

In 2016, crypto-researchers at Johns Hopkins University discovered that Apple’s iMessage app contained a fundamental flaw that cannot be permanently fixed. This flaw could allow adversaries to decrypt certain iMessages and attachments under certain circumstances.

Apple rushed out a quick (but weak) patch which solved the problem in the short term, but a permanent solution would require completely rewriting the existing iMessage architecture. Which Apple has not done. Nor has it followed the researchers’ recommendation to switch to the highly secure Signal protocol.

Rogue Apps

Thanks to its rigorous vetting process, Apple’s App Store is home to far fewer malicious apps than the Google Play Store is. But they exist, and according to a recent report in the New York Times, they send “anonymous, precise location data” to companies, enabling them to track users in worrying detail.

So does what happens on your iPhone stay on your iPhone?

By pulling stunts such as the one in Las Vegas, Apple is inviting close inspection of the iPhone’s privacy capabilities. Which is arguably not a very good idea.

As already noted, “out-of-the-box” iPhones are much more private and secure than their Android alternatives. But Android phones can be rooted and flashed with open source ROMs such as LineageOS and Copperhead, making them much more private and secure than any iDevice will ever be…

By Douglas Crawford

BestVPN.com.